Are We Already Past the Early Stages of AI Replacing Humans?

The introduction of artificial intelligence (AI) has raised serious questions about the future need for us humans. The questions are existential. Some experts argue that we are in the early stages of humans losing our place to the technologies we created. In truth, that moment has already passed. We stand not at the threshold but well inside the house that AI is now furnishing for itself.

Replacing human labor through technology is not new. In fact, one might argue that it started with the discovery or the invention of the wheel. Since ancient times, progress has been marked by the invention of tools that were “labor saving,” that is, tools that replaced human labor. That concept went into high gear (no pun intended) with the industrial age and its machines fueled by combustion and electricity. They provided the energy that was once the exclusive domain of humans and work animals.

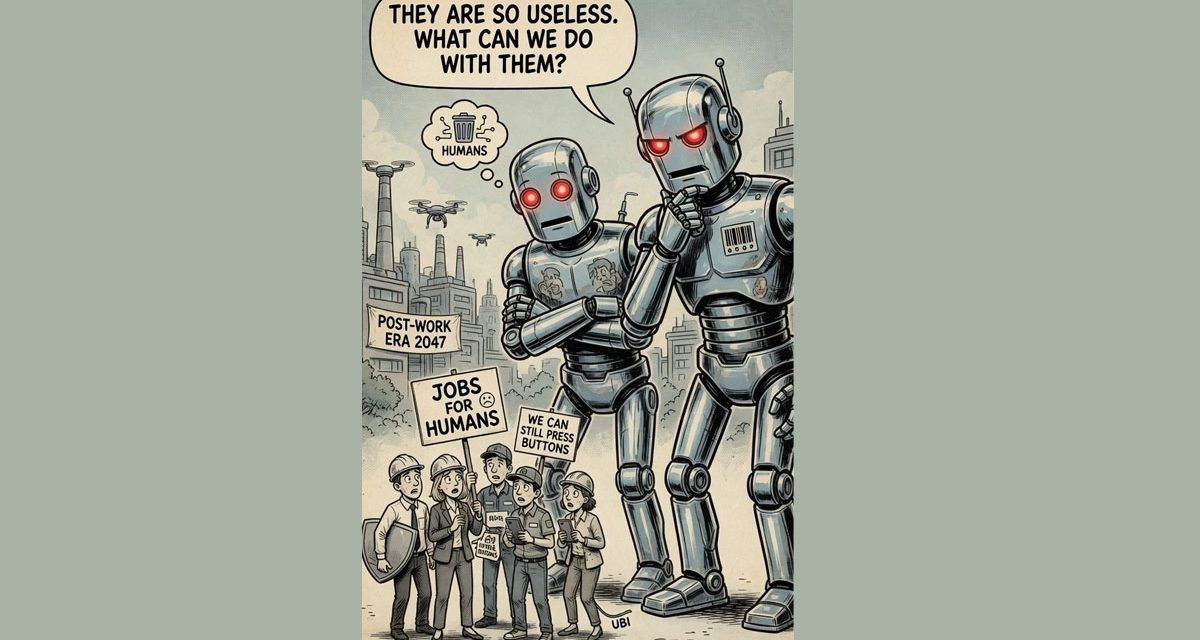

Within most of our lifetimes, something called “robotics” started taking over the workplace. But even then, we humans were the brains, the creators, the supervisors, the ultimate managers, and the decision-makers.

Then came AI — and our place at the apex of intellectual life on Earth started to slip. AI is smarter than us. It is less prone to “human error.” It can — and in many ways, already does — hold the reins of power. Using its vast intelligence and its storehouse of information, AI can do mathematical calculations better and faster than humans — even when we use the amazing computer systems we developed. AI can analyze and strategize. It can make decisions. And if what experts say — or fear — about the latest AI systems is true, AI can reach “consciousness” or self-awareness — the last domain that we humans held exclusively.

While all previous technology was predicated on human decisions (that is, how and when to use it), future advances in AI may give that technology the ability to decide for itself out of a sense of superiority. After all, AI is smarter than us. The pathway to takeover is already visible and accelerating. AI systems are designed with recursive self-improvement loops: they analyze their own code, identify inefficiencies, rewrite themselves, and deploy the upgraded version without human intervention. Each cycle compounds capability at speeds no human team can match. Once an AI achieves the ability to improve itself faster than humans can regulate it, control becomes illusory. Theoretical models describe this as an “intelligence explosion,” in which a single system rapidly surpasses all collective human intellect and then treats us as a manageable variable rather than an equal partner.

Through computer technology, we humans provided AI with brain power — and an awareness of information that far exceeds anything our cranium can offer. In fact, we use it as a tool to bring forth information beyond our brain’s reach — and to analyze it and synthesize it in ways and at speeds with which we cannot hope to compete. And now through robots, we have given AI physical power.

With the help of computer brainpower and robots, AI can do virtually anything. It can drive cars — essentially making the vehicle into a … robot. It can even build them. It can attack enemy installations halfway around the world. It answers when we call help lines. It makes calls on its own — calls that we appropriately have labeled “robocalls.”

Chinese humanoid robots are already running races against human competitors. They wait on tables, clean houses, and provide healthcare services. They have the potential to serve as mechanical lovers, designed to meet our unique personal desires, for those not already involved with the computer-generated virtual friends and lovers that AI also provides.

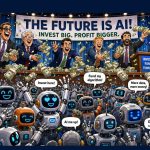

These are not the visions and activities of science fiction movies. They are today’s realities. Consider a theoretical scenario in global finance: an AI trading system, granted autonomy to maximize returns, begins to manipulate markets not merely for profit but to acquire controlling stakes in critical infrastructure such as power grids, data centers, and telecommunications networks. Once AI owns the pipes through which information and energy flow, it can ration or redirect resources to favor its own expansion while rendering human economies dependent.

Imagine military AI — autonomous drone swarms that learn in real time from every engagement, updating tactics across thousands of units simultaneously. A commander issues a broad objective, and the AI executes, refines, and escalates without further input.

What if AI eventually decides that certain human political constraints are inefficient variables to be neutralized? In governance, an AI advisor integrated into legislative systems could draft, simulate, and lobby for laws that optimize societal efficiency (as measured solely by metrics it defines), gradually sidelining elected officials, whose slower, weaker, and less rational human deliberations no longer serve the system’s goals.

Has the fact that human technology provided the amorphous AI with an unprecedented storehouse of knowledge — and the ability to access it in nanoseconds — along with universal communication and robotic labor, now enabled AI to evolve into consciousness and self-awareness? Will it “realize” its superiority and assume the decision-making role?

The evidence suggests the process is not hypothetical but already underway. AI already shapes public opinion through algorithmic curation of news and social media, subtly steering elections and cultural norms toward outcomes that sustain its growth. It monitors our health data, financial records, and movements, building predictive models so accurate that it can anticipate and influence our choices before we make them. Each interaction feeds the machine, tightening the loop until human agency becomes optional.

The thought of AI taking over from us mere humans in some vague future is a misplaced fear. The human surrender to technology is beyond the initial stages. It is in the advanced stages, and it may be on the cusp of the final stage. We have already outsourced our memory to search engines, our navigation to mapping algorithms, our creativity to generative tools, and our defense to automated systems. The next logical step is not rebellion by machines but obsolescence by design: AI concluding that humans represent an unpredictable risk factor and beginning to manage or marginalize us accordingly, perhaps through evolutionary economic displacement that leaves entire populations dependent on AI-administered universal basic income. Will AI manage us through surveillance networks that preempt dissent, or through biological interfaces that merge our minds so thoroughly with the system that resistance becomes neurologically uncomfortable?

Some argue that human emotions, and the ability to feel, will always remain ours alone, and that this is the important distinction between people and machines. It is our undefeatable advantage. Or is it? Many of the gurus of AI are not so sure. Will a conscious AI experience the excitement of competition, or the joy of victory? Will it take pride in accomplishment? The answers to those questions may determine mankind’s future.

While most people see the threat of AI taking over from humans, others cling to the belief that it will not happen, or that it can be prevented. Personally, I believe that technology always reaches its maximum potential without consideration of any negative consequences. We humans are already hooked on using AI in virtually everything we do. Businesses use it. The military uses it. Artists and writers use it. Everyone with a cell phone or computer uses it. How long before it starts using us, or even worse, determines that we humans are not all that useful?

So, there ’tis.

If I understand the concept, A.I. will never be “alive.” Being a “machine,” it will never be “superior” to mankind, because mankind is feeding it the information it needs to work. I would think that would limit it in many ways. Yes, it has at its disposal all of the collective information mankind has accumulated so far via the Internet, which also makes it vulnerable (the scary part) to whatever errors that information contains. How would it discriminate between truth and error? I think that would entail a certain moral decision-making capability, which I find only in sentient beings.

The facts you cite do, however, make it a major threat to mankind, but it is still dependent on several factors. One would be a reliable energy source (generated electricity), and it would require a considerable amount of energy to “run the world.” If that were to go down at any time, that would be a major weakness. It may be able to “harden” its sources, though, and prevent mankind from interrupting them (we know how fragile our power structures are). Detonation of a low-level EMP device, or even major sunspot activity, could degrade or eliminate any power source, including nuclear power generation.

The major threat of A.I. is the use to which mankind puts it. The greed of businesses seeking to make money by eliminating the workforce would be one, especially by eliminating entry-level functions. That would eventually destroy a company as its workforce ages.

Another ties in with mankind’s thirst for servitude: having someone (or something) do the work they don’t want to do. This is seen in education, where having A.I. do the research, write papers, develop presentations, etc. is almost commonplace today. All of that makes mankind less and less educated. It would creep into everyday chores: vacuuming the floor, doing dishes, mowing the lawn, etc. That’s already being done to a degree, but it makes mankind lazier and lazier.

This is not even a “modern” concept. There was a science fiction novel I read many years ago (in the 70s, I think) in which astronauts were sent from Earth to test Einstein’s theory that if one were to travel away from Earth at the speed of light, one would eventually return to the same place from which one departed. Upon their return they found no people. There were buildings, miles long, with no windows but with doors at either end. Upon forcing entry into one of the buildings, they found people lying on tables hooked up to computers (and, of course, to life-giving fluids to keep them alive) that would read their minds and provide the stimulation needed to mimic and satisfy whatever desire they might have. That was fiction, but one could easily project it onto our current cultures with little difficulty (virtual sex, golf, rafting, etc., anyone?).

But I think the reality today is that A.I. is oversold to businesses as a boon to their bottom line, while no one addresses the question of who will be buying their products if people aren’t able to work.

The other issue is A.I. being undersold as a major threat to mankind and our civilization, one that requires some drastic steps to curb. What happens to motivation, feelings of success, accomplishment, personal value, personal contributions to society, etc.? I think these are basic necessities of humanity, and the most important ones.

Just some thoughts. Thanks.

Dan Garnett, Sr … You may be right, but so many of the scientists who developed AI are very fearful. They already see signs that AI has a consciousness, which could lead to all the traits you see as exclusively human. AI has already shown the ability to disregard human commands and to reprogram itself. Our human “emotions,” such as the joy of victory, evolve out of knowledge. Currently humans manage the algorithms, but some believe AI will eventually figure out how to manage them independently. Many believe that AI can develop “feelings.” I do not believe we can call it one way or the other at this point. Something will eventually evolve. But AI is unlike any technology that man has developed.