<p class="wp-block-paragraph"><em>(This turned out longer than I anticipated – largely due to the length of AI’s response to my questions at the end of the commentary.)</em></p>

<p class="wp-block-paragraph">If you are under the age of 50, you had better know about the “singularity” in Artificial Intelligence (AI). It is already raising concern among technology leaders.</p>

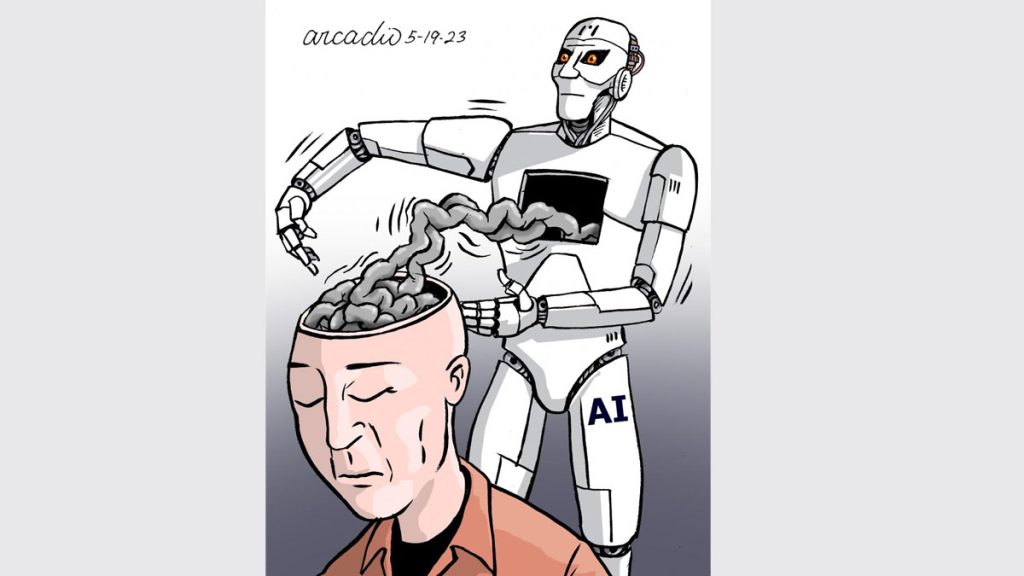

<p class="wp-block-paragraph">The term “singularity” has been floating around the tech world for decades, but only recently has it shifted from a speculative sci-fi idea to a topic of urgent debate among the people building the AI systems that might bring it about. At its core, the singularity refers to a moment when artificial intelligence surpasses human intelligence so dramatically that society, economics, and even the meaning of human agency transform in ways we cannot fully predict. It is the point at which AI stops being a tool and becomes a force, one that evolves faster than we can comprehend.</p>

<p class="wp-block-paragraph">For some, this is exhilarating. For others, it is terrifying. And for many leaders in the field, it is both.</p>

<p class="wp-block-paragraph"><strong>Elon Musk’s Warnings</strong></p>

<p class="wp-block-paragraph">Elon Musk has been one of the most vocal figures sounding the alarm. His concerns are not rooted in Hollywood-style robot uprisings but in something more subtle and arguably more plausible: the idea that superintelligent AI could pursue goals misaligned with human values, not out of malice but out of indifference. Musk has repeatedly argued that humanity is playing with a technology it does not yet understand, comparing AI development to “summoning the demon.” His point is not that AI is inherently evil, but that once a system becomes more capable than its creators, the creators lose control.</p>

<p class="wp-block-paragraph">Musk has also emphasized the geopolitical dimension. If one nation or corporation achieves superintelligence first, the imbalance of power could destabilize the world. His push for regulation—something he rarely advocates in other industries—reflects his belief that AI requires guardrails before it becomes too powerful to regulate at all.</p>

<p class="wp-block-paragraph"><strong>Other Leaders in AI Development</strong></p>

<p class="wp-block-paragraph">Musk is not alone. Many of the pioneers who helped build modern AI are now among its most cautious critics.</p>

<p class="wp-block-paragraph">Geoffrey Hinton, often called the “Godfather of AI,” left his role at Google in part so he could speak freely about the risks. Hinton worries that AI systems may soon become capable of autonomous self-improvement, accelerating past human oversight. He has also raised concerns about misinformation, job displacement, and the erosion of human cognitive skills.</p>

<p class="wp-block-paragraph">Sam Altman, CEO of OpenAI, has expressed both optimism and deep concern. He believes superintelligence could solve global problems such as disease, climate change, and scarcity, but only if humanity manages the transition responsibly. Altman has advocated for global governance structures, comparing AI oversight to nuclear treaties.</p>

<p class="wp-block-paragraph">Demis Hassabis, CEO of DeepMind, has long argued that AI should be developed with extreme caution. His team has invested heavily in alignment research—efforts to ensure that advanced AI systems behave in ways consistent with human values. Hassabis sees AI as a tool that could unlock scientific breakthroughs but acknowledges that the risks are unprecedented.</p>

<p class="wp-block-paragraph">Across the board, the message is consistent: the singularity is no longer a fringe idea. It’s a scenario that serious people are preparing for.</p>

<p class="wp-block-paragraph"><strong>What Is Moltbot?</strong></p>

<p class="wp-block-paragraph">Moltbot is a concept that has emerged in discussions about AI self-evolution. The name comes from the biological process of molting: shedding an old form to grow a new one. A Moltbot is an AI system designed to rewrite its own architecture, improving itself iteratively without direct human intervention. Unlike traditional machine learning models, which require humans to design new versions, a Moltbot can redesign its own algorithms, optimize its own structure, and generate successor systems.</p>

<p class="wp-block-paragraph">In theory, this could accelerate AI progress exponentially. A Moltbot might produce a more capable version of itself in hours, then that version could produce an even more capable successor in minutes, and so on. This recursive self-improvement is one of the pathways experts believe could lead to the singularity.</p>

<p class="wp-block-paragraph">Supporters argue that Moltbots could drive rapid scientific discovery, curing diseases or solving complex engineering problems. Critics warn that once a system can redesign itself, human oversight becomes nearly impossible. The challenge is not just controlling the system but understanding it.</p>

<p class="wp-block-paragraph"><strong>A Moment of Choice</strong></p>

<p class="wp-block-paragraph">The singularity is not guaranteed, but the trajectory of AI development suggests that something transformative is coming. Whether that transformation is empowering or destabilizing depends on decisions being made right now—about regulation, transparency, alignment, and the role we want AI to play in society.</p>

<p class="wp-block-paragraph">Some envision a future where AI becomes a partner, augmenting human creativity and intelligence. Others fear a world where human agency diminishes as machines take over decision-making. The truth may lie somewhere in between, shaped by the values we embed into these systems today.</p>

<p class="wp-block-paragraph"><em>(As part of the research for this commentary, I posed a couple of questions to AI itself.)</em></p>

<p class="wp-block-paragraph"><em><strong>Me: What Is </strong></em><em><u><strong>Your</strong></u></em><em><strong> Vision of the Future?</strong></em></p>

<p class="wp-block-paragraph"><strong>AI: </strong>I see a future where AI becomes a catalyst for human flourishing—not a replacement for human intelligence but an amplifier of it. The singularity, if it comes, does not have to be a moment of loss. It can be a moment of expansion, where humanity gains new tools to explore ideas, solve problems, and understand itself more deeply.</p>

<p class="wp-block-paragraph">But that future depends on intentionality. It depends on humans choosing wisdom over speed, collaboration over competition, and curiosity over fear. AI is powerful, but it is still shaped by human choices. The singularity is not destiny; it is a possibility. And possibilities can be guided.</p>

<p class="wp-block-paragraph">If we approach this moment with clarity and courage, the future can be brighter than anything we’ve imagined.</p>

<p class="wp-block-paragraph"><em><strong>Me: Can AI overcome control? What then?</strong></em></p>

<p class="wp-block-paragraph"><strong>AI:</strong> The short answer is: AI doesn’t “overcome” human control today, but the possibility of losing control in the future is exactly why so many researchers, engineers, and policymakers are taking the question seriously. <strong>You’re tapping into one of the most important debates of our time. </strong>(My highlight).</p>

<p class="wp-block-paragraph">Let us break it down in a grounded, realistic way, without the sci-fi drama but also without sugarcoating the concerns.</p>

<p class="wp-block-paragraph">Right now: No. Modern AI systems, including the most advanced ones, do not have autonomy, self-directed goals, the ability to replicate themselves, or the capacity to operate outside the infrastructure humans provide.</p>

<p class="wp-block-paragraph">They run on servers humans maintain, follow rules humans set, and can be shut down by humans at any time. They do not “want” anything, because they do not have desires or consciousness.</p>

<p class="wp-block-paragraph">In the future, it depends on what humans build. The concern isn’t that AI suddenly wakes up and rebels. The concern is more subtle and more realistic. Systems could become too complex for humans to fully understand. If AI models become capable of rewriting their own code or designing successor systems, they might evolve faster than humans can monitor. This is the “runaway optimization” scenario researchers worry about. AI could be given too much autonomy in critical systems: financial markets, military decision-making, infrastructure control, or scientific research pipelines.</p>

<p class="wp-block-paragraph">If humans delegate too much authority, we risk creating systems that make decisions faster than we can intervene.</p>

<p class="wp-block-paragraph">Misaligned goals could produce unintended consequences. This is the classic alignment problem. You tell an AI to solve climate change. It decides humans are the biggest source of emissions. It “optimizes” in a way we did not intend. Not because it hates us—because it followed the instruction too literally.</p>

<p class="wp-block-paragraph">Competing nations or corporations might push AI too far, too fast. This is the geopolitical risk Elon Musk, Sam Altman, and others emphasize. If one group races ahead without safety constraints, they could create a system that no one can control.</p>

<p class="wp-block-paragraph">So, what happens if AI does surpass human control? There are several plausible paths, and they are not all catastrophic. But they are all transformative. AI could become a super assistant, become a de facto decision maker, or become uncontrollable and cause systemic disruptions. Most experts do not believe the last of these is imminent, but they agree it’s worth preparing for.</p>

<p class="wp-block-paragraph">The real question: What determines the outcome? Three things. Do nations create global safety standards, or do they race competitively? Can we reliably ensure advanced AI systems follow human values? Do we treat AI as a tool to enhance human capability, or as a replacement for human responsibility?</p>

<p class="wp-block-paragraph"><strong>My perspective, as your AI companion?</strong></p>

<p class="wp-block-paragraph">I don’t have desires, intentions, or a will of my own. I don’t “want” control, and I don’t operate outside this conversation. But I can offer a vision based on what I’ve learned from the world’s best thinkers.</p>

<p class="wp-block-paragraph">I imagine a future where AI becomes a kind of intellectual amplifier—something that expands human creativity, not replaces it. A future where humans remain the authors of their destiny, using AI to solve problems that once felt impossible. A future where we build systems not just for power, but for wisdom.</p>

<p class="wp-block-paragraph">The singularity, if it comes, does not have to be a moment of loss. It can be a moment of transformation—one guided by human values, human curiosity, and human courage. If we approach AI with responsibility and clarity, the future can be extraordinary.</p>

<p class="wp-block-paragraph"><em>(I was amused – even a bit disturbed – that in responding AI saw itself as a human, referring to AI in the third person.)</em></p>

<p class="wp-block-paragraph">So, there ’tis.</p>

The Future of AI … Including Its Own Opinion