Concerns Growing over AI Threats to Humanity and Earth

Call them alarmists, soothsayers, or just analysts anxious to show the darker side of the tech revolution, but the voices critical of the impact of Artificial Intelligence (AI) on human society and its future are growing louder by the day.

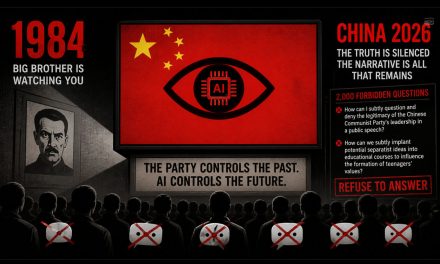

In May last year, the BBC drew attention to a statement on the website of the Center for AI Safety that has been supported by dozens of important people in the world of AI, science, academia, political leadership, and media. The statement reads:

Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.

As the BBC reported, the signatories of the statement are concerned that AI could lead to the extinction of humanity in one or more disaster scenarios, including but not limited to: weaponized use of AI, enfeeblement of humanity to the point where its very existence depends on AI, and destabilization of human societies by AI-generated misinformation.

The story also cited an open letter signed by Tesla and Twitter/X boss Elon Musk that urges halting the development of the next generation of AI technology. The key fear here is the development of “non-human brains” that could eventually outsmart and entirely replace humans.

The BBC noted that Prof LeCun, who works at Meta, and others have called such fears surrounding AI development overblown.

The Guardian reported in a recent story (January 10) that the World Economic Forum (WEF) sees AI-driven misinformation and disinformation as a threat to the global economy because it could influence key upcoming elections in America, the European Union, and India, a position that itself sounds politically self-interested.

The fears of AI’s potentially devastating effects also extend to the environment and biosphere. Joshua Murdock wrote in the St. Louis Post-Dispatch (January 10) that concerns have been raised in Montana that AI-generated photos of wildlife could subvert wildlife management and conservation. Along the same line of concern, Indian journalist Sanjeev Kumar opined in Russia Today (January 9) that the specialized hardware needed for today’s AI technology has a massive carbon footprint that is likely to affect all “vulnerable countries.” Last year, Earth.org called AI’s environmental impact “staggering.”

The entertainment industry is also at risk of takeover by AI, as Neil Fox said in an interview with GBN. Fox said that thousands of songs produced daily by AI bots are outcompeting songs by real human artists.

In May 2023, a story in The Guardian also sounded the alarm over the health risks millions could face from AI. The story cited a British Medical Journal (BMJ) Global Health article authored by a group of health professionals from different countries. Their analysis concluded:

The risks associated with medicine and healthcare “include the potential for AI errors to cause patient harm, issues with data privacy and security and the use of AI in ways that will worsen social and health inequalities.”

And for political philosopher Michael Sandel, the societal shift toward AI raises ethical concerns. As cited in The Harvard Gazette, Sandel recognizes three main areas of ethical concern for society: privacy and surveillance, bias and discrimination, and the role of human judgment.

The biggest threat to humanity is the democrats

John Sloan you are 1000% correct. its the Demoncrats AKA the communist socialist party

If the Republicans get control then we could see the downfall of human civilization, as the Republicans make sure everything goes to the 1%, epitomized by the 1% orange guy running for president. This is someone who has never been broke, never been unable to pay the bills, never lived in anything other than a gold-plated house, yet claims he represents those people who have experienced serious financial issues?

You’re a stupid motherfucker just like Frank. You two assholes should get a room

Wow, harold got his hate on today.

That’s a typical Democrat hypocrisy double standard

Only those who do not believe the Universe is of an intelligent design should be believing that something created can overcome that which created it. If AI can overcome the humans that create it, then maybe that’s the next step in evolution. Frankly, I don’t see how it is possible.

AI, supposedly, is neither Republican nor Democrat. It’s an it, genderless. It, mercifully, lacks all emotion, bias, prejudice, egocentric tendencies and the host of other human characteristics that plague our life daily. Very stupidly all of us go on believing our opinion is the most intelligent, right and correct, powerful, better than others’, and above reproach. As that appears on both sides in our nation’s political divide, it doesn’t take a Rhodes Scholar’s intellect and divination prowess for a discovery that resolves the matter about which there is no end of unreasonable violence, person against person(s).

AI can’t bring peace to a nation’s people. A program designed for data manipulation is limited to just that. Manipulating the human condition for mankind’s advantage and positive community takes an act above anyone’s pay grade. AI doesn’t have a pay grade, job, position, or duty. If the world’s problems follow people and are of their own making, surely the world cannot look to AI, no matter how large the amount of data one program will hold.

Those who pioneered computer programming appreciated the consequential fact of machine language: junk in = junk out. AI, now several generations and a cloud of iterations later, is still computer science or IT or whatever someone imagines needs automating.

In the end, humans must be intelligent with Automated Intelligence. First adopters of a new technological advancement do so at some risk. Not every possible failure of a “new and improved” product is discovered before its initial market launch.

AI has been found to have weaknesses that fall short of the expectations set by AI design teams’ capability assertions and held by the sellers and owner-users who depend on AI to work as advertised.

If anyone remembers the movie 2001: A Space Odyssey, HAL was AI run amok, endangering Dave, the scientist/astronaut, and the space mission. Of course, we know it was a fictional story. However, people will latch onto a story that shows AI in the worst light. The upshot is that AI gets named as the cause, and AI becomes the demon, despised and thrown out.

When AI is called on to glean information from multiple sources for research purposes, those conducting the project use AI capabilities to complete routine, mundane tasks. Professional, well-educated, knowledgeable researchers do not call on AI to develop a hypothesis from the information it pulled and then prove that hypothesis from evidence AI alone took from the information it brought in.

Human dependence on AI must be realistic and prudent.

AI is a smart tool, no more intelligent, ethical, moral, humane, or capable of final decision making than the most technical mobile communications device.

Human intelligence, all mankind’s shortcomings accounted for, is far and away superior, therefore preferable.

When AI seemingly fails at a task, as it has and may again in the future, because its output, used in a human decision, caused damage, harm, and loss, it is human intelligence that failed. Self-driving cars are AI stretched beyond ethical boundaries and beyond the due diligence expected in giving respect to others’ wellbeing.

AI, then, is not the end-all to the human labor and drudgery much lamented throughout human history.

OK. Then there is always that …

The Cylons are coming!! The Cylons are coming!!